The big news - The AI Act is coming!

Shortly before the delegates from the European Parliament, the Council and the Commission gathered for the last technical trilogue meeting on Monday, 22 January 2024, the final draft was leaked on social media (see the consolidated, easy-to-read version here as well as a comprehensive compare version here).

Whilst there has been speculation as to whether the two heavyweights France and Germany could still hinder the AI Act, particularly due to the new rules for general-purpose AI models, any such last-ditch attempt at further changes is extremely unlikely. Everybody, including the French and German governments, is aware that any surprise move would cause significant, highly undesirable political damage to this banner project of the European Union.

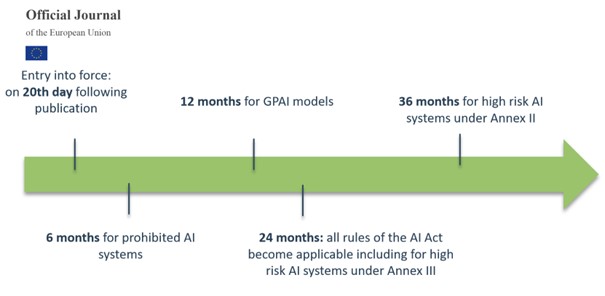

The European Parliament has already scheduled meetings of the Committees on Civil Liberties, Justice and Home Affairs and Internal Market on 14 and 15 February 2024 in preparation for the plenary vote. This will be followed by a final legal-linguistic revision by both co-legislators. The formal adoption by Council and Parliament is expected at the end of February or the beginning of March. Following its publication, the AI Act as now drafted can be expected to enter into force before the summer break. Some provisions of the AI Act (the prohibitions on AI systems with unacceptable risks) will already become mandatory six months later, i.e. well before the end of the year. Most other provisions will then gradually apply in the course of 2025 and 2026, as shown for the most important aspects in this graphic:

With that timeline in mind, it is clear that all stakeholders concerned need to start or intensify organizational preparations.

1. The AI Act's core concepts

The final wording of the AI Act substantially follows the political agreement reached on 8 December 2023, which we cover in more detail here with a more recent analysis here.

The AI Act is built on a number of core concepts that are relevant for its understanding and application. Given their importance, we will briefly restate the most important concepts:

1.1. Scope of definition of AI systems

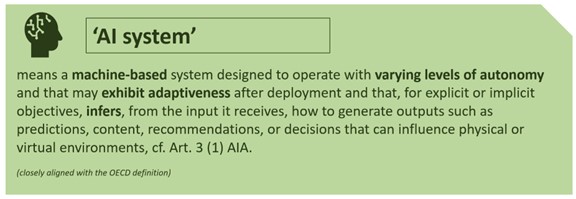

What does it take for a system to be (artificially) intelligent? Given the overriding importance of this question for the AI Act’s entire scope of application, the EU institutions hotly debated this question right until the end of the legislative process. Eventually, the EU decided to define AI very broadly and to align it with the definition used by the OECD (in the context of the OECD’s non-binding policy framework and guidelines for ethical and responsible use of AI). This definition is exceptionally broad and all-encompassing:

In order to exclude linear algorithms, i.e., traditional computer programs, Recital 6 of the AI Act clarifies that AI systems should not include rules-based systems. Whilst this may give some reassurance against an overly broad application, it has to be observed that such a system is not actually required to be self-learning, as the wording suggests that such systems (only) “may exhibit some adaptiveness”.

1.2. Territorial scope

The AI Act and the “Coordinated Plan on AI” are part of the European Union’s efforts to be a global leader in promoting trustworthy AI at the international level. The AI Act therefore seeks to regulate every system that affects people in the EU, directly or indirectly. It accordingly applies extraterritorially, for example to providers in countries outside the EU that place AI systems or general-purpose AI models on the market in the EU, or that put AI systems into service in the EU. It further applies to providers or deployers of AI systems outside the EU where the output produced by the AI system is used within the EU. In graphical terms, this looks as follows:

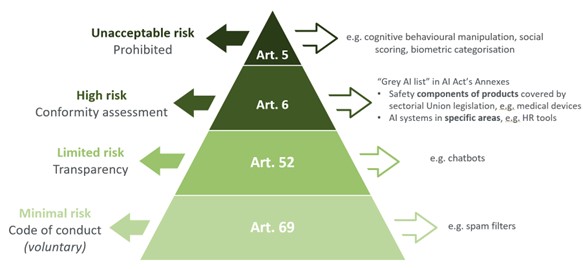

1.3. Risk-based approach for AI system classification

Arguably the AI Act’s most important concept is the risk-based approach with four levels of risk for AI systems, supplemented by specific rules for general-purpose AI models.

AI systems with unacceptable risks will be prohibited within the EU six months after entry into force. These systems include the following (with specific exceptions):

- deploying subliminal, manipulative, or deceptive techniques to materially distort behavior and impair informed decision-making, causing significant harm;

- exploiting vulnerabilities related to age, disability, or socio-economic circumstances to materially distort behavior, causing (or being reasonably likely to cause) significant harm;

- biometric categorization systems inferring sensitive attributes;

- social scoring, i.e., evaluating or classifying individuals or groups based on social behavior or personal characteristics, causing detrimental or unfavorable treatment of those people;

- assessing the risk of an individual committing criminal offenses solely based on profiling or personality traits and characteristics;

- compiling facial recognition databases, through untargeted scraping;

- inferring emotions in workplaces or educational institutions, except for AI systems used for medical or safety reasons;

- 'real-time' remote biometric identification (RBI) in publicly accessible spaces for law enforcement (unless the use is strictly necessary in certain defined scenarios).

For the next level of so-called high-risk AI systems, the AI Act establishes detailed and comprehensive obligations and requirements that predominantly apply to providers and deployers of those systems. These obligations address a wide range of AI governance measures and technical interventions (including transparency, risk management, accountability, data governance, human oversight, accuracy, robustness and cybersecurity) that need to be implemented during the design and development stages, and be monitored and maintained throughout the AI lifecycle. There are two main groups of high-risk AI systems:

- “Annex II systems”, i.e. AI systems that are intended to be used as a safety component of a product, or that are themselves a product, covered by the EU laws listed in Annex II and required to undergo a conformity assessment; these are typically AI systems used in the context of risk-prone, highly regulated products.

- “Annex III systems”, i.e. AI systems intended to serve a special purpose listed in Annex III (with specific new exceptions introduced under the compromise text for systems which do not pose a significant risk of harm to health, safety or fundamental rights). The purposes listed in Annex III are:

  - non-banned biometrics;

  - critical infrastructure;

  - education and vocational training, including systems to determine access or admission, evaluate learning outcomes, and monitor and detect prohibited behavior during tests;

  - employment, workers management and access to self-employment, including systems for recruitment, selection, monitoring, termination or promotion;

  - access to and enjoyment of essential public and private services, including credit scoring, and pricing in health and life insurance;

  - law enforcement;

  - migration, asylum and border control management;

  - administration of justice and democratic processes, including election-influencing AI, e.g. recommender algorithms on social media.

While there have been endeavors to update and further define the scope of high-risk AI systems in the compromise version of the AI Act, and certain relatively narrow exemptions have been introduced to limit the broad scope, uncertainty remains about certain types of classification. Companies are well advised to apply an appropriate level of governance to any AI system in use. The EU Commission is tasked with providing, no later than 18 months after entry into force, further guidelines specifying the practical implementation of the classification of AI systems as high-risk.

On the lower end of the risk scale, certain limited-risk AI systems need to comply with minimal transparency and/or marking requirements. The transparency obligations primarily address AI systems intended to directly interact with natural persons. In addition, the compromise text requires providers of AI systems generating synthetic audio, image or text content to ensure that the outputs of those systems are marked in a machine-readable format and are detectable as artificially generated or manipulated. These providers must further ensure the effectiveness, interoperability, robustness and reliability of their technical solutions. Further transparency and marking obligations exist for deployers of certain AI systems (enabling emotion recognition or biometric categorization, deep fakes, or manipulation of text on matters of public interest). Other AI systems with minimal risks may be covered by future voluntary codes of conduct to be established under the AI Act.

1.4. Specific rules for general-purpose AI

The risk-based classification of AI systems is supplemented by rules for general-purpose AI models (GPAI), which were introduced into the text last year in response to the rise of foundation models, such as large language models ("LLMs"). The specific obligations for these models can be grouped into the following four categories, with additional obligations for general-purpose AI models that entail systemic risks:

- drawing up and keeping up to date technical documentation of the model, including the training and testing process and evaluation results;

- providing transparency to downstream system providers looking to integrate the model into their own AI system;

- putting in place a policy for compliance with copyright law;

- publishing a detailed summary of the training data used in the model’s development.

Providers of GPAI models with systemic risk (which will be assessed based on technical thresholds indicating high-impact capabilities, and these thresholds are expected to evolve further in the future) must in addition perform model evaluations, implement risk assessment and mitigation measures, maintain incident response and reporting procedures, and ensure an adequate level of cybersecurity protection. The EU Commission may adopt delegated acts to amend the thresholds.

1.5. The new AI Office of the EU and its authority

Member States hold a key role in the application and enforcement of the AI Act. In this respect, each Member State must designate one or more national competent authorities to supervise the application and implementation of the AI Act, as well as carry out market surveillance activities.

To increase efficiency and to establish an official point of contact with the public and other counterparts, each Member State is further expected to designate one national representative, who will represent the country on the European Artificial Intelligence Board, which is yet to be established.

In addition, the Commission will establish a new European AI Office, within the Commission, which will supervise general-purpose AI models as well as cooperate with the European Artificial Intelligence Board and be supported by a scientific panel of independent experts.

The new entity will be primarily responsible for supervising compliance by providers of GPAI systems, while also playing a supporting role in other aspects such as the enforcement of the rules on AI systems by national authorities.

1.6. Penalties and enforcement

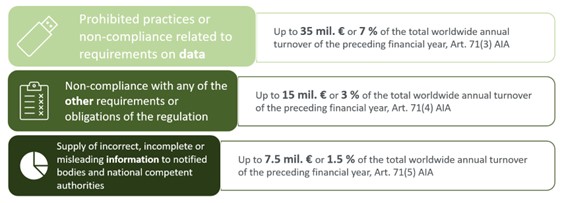

The AI Act comes with drastic enforcement powers and potentially high penalties. Penalties must be effective, proportionate and dissuasive, and can have a significant impact on businesses. Depending on the severity of the infringement, they range from €7.5m to €35m or from 1.5% to 7% of the global annual turnover (whichever is higher or, in the case of SMEs including start-ups, whichever is lower).
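The "whichever is higher" and "whichever is lower" rule can be made concrete with a short calculation. The following is a minimal sketch that assumes only the two endpoint tiers quoted above (€7.5m or 1.5%, and €35m or 7%); the names `PenaltyTier` and `maximum_fine` and all turnover figures are hypothetical and chosen purely for illustration. It computes the upper limit of a fine, not the amount an authority would actually impose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    """One penalty tier: a fixed amount and a share of global annual turnover."""
    label: str
    fixed_amount_eur: int         # fixed amount in euros
    turnover_basis_points: int    # share of turnover in basis points (1% = 100)


# Only the two endpoints of the range quoted in the text are modelled here.
LOWEST_TIER = PenaltyTier("lowest tier", 7_500_000, 150)     # EUR 7.5m or 1.5%
HIGHEST_TIER = PenaltyTier("highest tier", 35_000_000, 700)  # EUR 35m or 7%


def maximum_fine(tier: PenaltyTier, global_annual_turnover_eur: int,
                 is_sme: bool = False) -> int:
    """Return the upper limit of the fine for the given tier, in euros.

    Regular undertakings: the higher of the fixed amount and the turnover share.
    SMEs (including start-ups): the lower of the two.
    """
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_share = global_annual_turnover_eur * tier.turnover_basis_points // 10_000
    if is_sme:
        return min(tier.fixed_amount_eur, turnover_share)
    return max(tier.fixed_amount_eur, turnover_share)


if __name__ == "__main__":
    # Hypothetical group with EUR 1bn turnover: 7% (EUR 70m) exceeds EUR 35m.
    print(maximum_fine(HIGHEST_TIER, 1_000_000_000))            # 70000000
    # Hypothetical SME with EUR 10m turnover: 7% (EUR 0.7m) is below EUR 35m.
    print(maximum_fine(HIGHEST_TIER, 10_000_000, is_sme=True))  # 700000
    # Hypothetical company with EUR 100m turnover, lowest tier: EUR 7.5m exceeds 1.5%.
    print(maximum_fine(LOWEST_TIER, 100_000_000))               # 7500000
```

For large groups the turnover-based percentage will usually be the operative limit, whereas for SMEs the "whichever is lower" rule means the limit scales with their own turnover rather than with the fixed amount.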

AI Act's impact on businesses

The AI Act provides for a wide range of governance and compliance obligations, which go beyond merely addressing providers of high-risk AI systems and potentially apply to the whole spectrum of actors involved in the operation, distribution or use of any AI system (including providers, importers, distributors and deployers of AI systems).

To ensure and be able to demonstrate compliance with the future obligations under the AI Act, and to avoid risks and liabilities, it is essential for organisations to start evaluating the impact that the AI Act will have on their operations. The AI Act forms one component of the AI governance program that basically every business will need to implement, and requires businesses to verify, adapt, and implement appropriate standards, policies and procedures for an appropriate use of AI technology. Developing an adequate AI strategy and building a compliance, governance and oversight program that interacts effectively with other business strategies and objectives (including risk management, privacy, data governance, intellectual property, security and many others) will become one of the biggest challenges for businesses in the digital economy.

We will address the steps to be taken by businesses in light of the requirements under the AI Act in Part 2 of our AI Act impact analysis.

Next steps and global context

At the international level, the EU institutions will continue to work with multinational organizations, including the Council of Europe (Committee on Artificial Intelligence), the EU-US Trade and Technology Council (TTC), the G7 (Code of Conduct on Artificial Intelligence), the OECD (Recommendation on AI), the G20 (AI Principles), and the UN (AI Advisory Body), to promote the development and adoption of rules beyond the EU that are aligned with the requirements of the AI Act.

In addition, the EU Commission is initiating the so-called “AI Pact”, which seeks voluntary commitments from industry to start implementing the requirements of the AI Act ahead of the legal deadline. Interested parties will meet in the first half of 2024 to gather ideas and best practices that could inspire future pledges (declarations of engagement). Following the formal adoption of the AI Act, the AI Pact will be officially launched and "frontrunner" organizations will be invited to make their first pledges public.

Authored by David Bamberg, Martin Pflueger, Nicole Saurin, Stefan Schuppert, Jasper Siems, Dan Whitehead, Eduardo Ustaran and Leopold von Gerlach.

_________________________________________________________

Our contacts in your jurisdiction for these issues are listed in the contacts section of the Hogan Lovells AI Hub.